Latest News


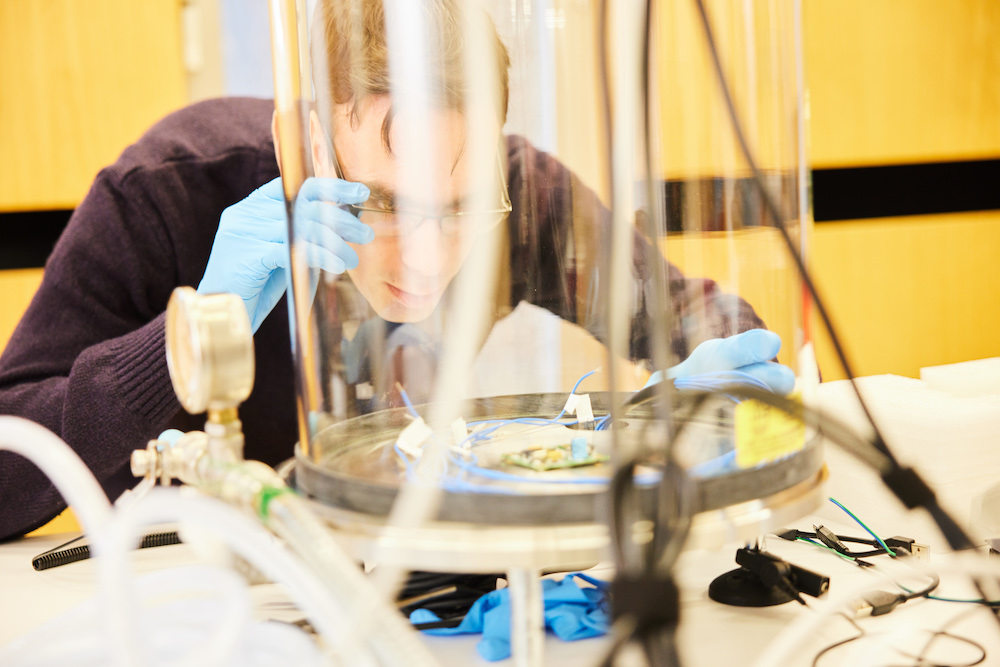

Boost for autonomous systems research at Uni’s SnT

Press Releases, Research, Computer Science & ICT

Events

Partner with SnT

Access leading research and insight

Launched in 2011, our Partnership Programme was created to help public entities and companies of all sizes achieve their innovation and optimisation goals, while providing our researchers with real-world challenges to solve.

Today, our roster has expanded to include 60+ actors from a variety of sectors, including FinTech and space. We work with both public and private organisations in Luxembourg and around the world to ensure our research has socioeconomic impact.

SnT in Numbers

- 505+ Staff
- 18 Research Groups
- 6 Spin-offs